According to this study, X's algorithm favors conservative content.

Since Elon Musk's acquisition of X in 2022, the platform has been regularly accused of altering the balance of public debate.

A new scientific study published in Nature provides data that fuels this controversy. According to its authors, the algorithmic functioning of the "For You" feed tends to expose users more to conservative content.

This conclusion is not entirely new, but this time it rests on a large-scale experiment. It raises questions about the role of algorithms in shaping political opinions, especially given how central social media has become to accessing information…

A seven-week experiment

Does X influence your opinion?

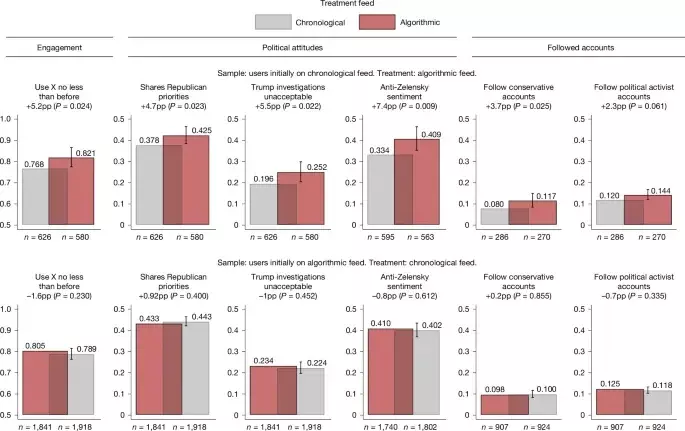

Source: Nature

In this 2023 study of nearly 5,000 active US users of X, researchers from institutions including the Paris School of Economics, the University of St. Gallen, and Bocconi University divided participants into two groups for seven weeks.

Some were restricted to exclusively using the chronological feed, which only displays posts from followed accounts, while others switched to the algorithmic feed, "For You," which is now enabled by default.

The results show that users exposed to the algorithmic feed shifted toward more conservative positions on several political issues. The researchers report that posts from traditional media appeared 58% less frequently in the algorithmic feed, while content from political activists was 27% more present.

Among the measured effects, the study notes a 7.4 percentage point decrease in favorable opinion of Volodymyr Zelensky among participants who switched from the chronological feed to the algorithmic feed. Perceptions of the legal proceedings against Donald Trump and of political priorities were also affected.

A structural bias or an engagement effect?

The findings of this study echo other investigations, including a recent one by Sky News, which already suggested an algorithmic bias favoring content on the right of the political spectrum. However, the researchers urge caution, as the experiment focuses on one country, one time period, and one specific panel of users who were paid to comply with the guidelines. Therefore, the results cannot be automatically extrapolated to all global usage.

Nevertheless, the power of recommendation systems remains a central question: participants exposed to the algorithmic feed spent more time on the platform, which confirms the model's effectiveness at driving engagement. It is precisely this logic of attention optimization that fuels criticism surrounding "filter bubbles" and polarization.

While X claims an approach focused on freedom of expression, this study illustrates how the technical architecture of a social network can, sometimes subtly, influence the dynamics of public debate…
